While I am glad this ruling went this way, why’d she have to diss Data to make it?

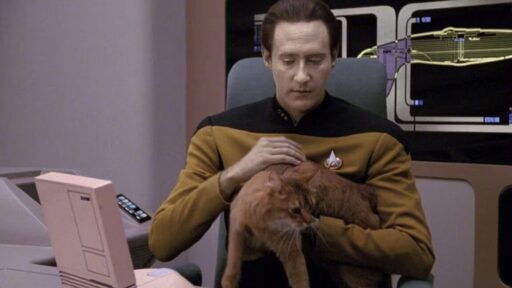

To support her vision of some future technology, Millett pointed to the Star Trek: The Next Generation character Data, a sentient android who memorably wrote a poem to his cat, which is jokingly mocked by other characters in a 1992 episode called “Schisms.” StarTrek.com posted the full poem, but here’s a taste:

"Felis catus is your taxonomic nomenclature, / An endothermic quadruped, carnivorous by nature; / Your visual, olfactory, and auditory senses / Contribute to your hunting skills and natural defenses.

I find myself intrigued by your subvocal oscillations, / A singular development of cat communications / That obviates your basic hedonistic predilection / For a rhythmic stroking of your fur to demonstrate affection."

Data “might be worse than ChatGPT at writing poetry,” but his “intelligence is comparable to that of a human being,” Millett wrote. If AI ever reached Data levels of intelligence, Millett suggested that copyright laws could shift to grant copyrights to AI-authored works. But that time is apparently not now.

So, I will grant that right now humans are better writers than LLMs. And fundamentally, I don’t think the way that LLMs work right now is capable of mimicking actual human writing, especially as the complexity of the topic increases. But I have trouble with some of these kinds of distinctions.

So, not to be pedantic, but:

Couldn’t you say the same thing about a person? A person couldn’t write something without having learned to read first. And without having read things similar to what they want to write.

This is kind of the classic Chinese room philosophical question, though, right? Can you prove to someone that you are intelligent, and that you think? As LLMs improve and become better at sounding like a real, thinking person, does there come a point at which we’d say that the LLM is actually thinking? And if you say no, the LLM is just an algorithm, generating probabilities based on training data or whatever techniques might be used in the future, how can you show that your own thoughts aren’t just some algorithm, formed out of neurons that have been trained based on data passed to them over the course of your lifetime?

People do this too, though… It’s just that LLMs do it more frequently right now.

I guess I’m a bit wary about drawing a line in the sand between what humans do and what LLMs do. As I see it, the difference is how good the results are.

I would do more research on how they work. You’ll be a lot more comfortable making those distinctions then.

I’m a software developer, and have worked plenty with LLMs. If you don’t want to address the content of my post, then fine. But “go research” is a pretty useless answer. An LLM could do better!

Then you should have an easier time than most learning more. Your points show a lack of understanding about the tech, and I don’t have the time to pick everything you said apart to try to convince you that LLMs do not have sentience.

“You’re wrong, but I’m just too busy to say why!”

Still useless.

It might surprise you to know that you’re not entitled to a free education from me. Your original query of “What’s the difference?” is what I responded to willingly. Your philosophical exploration of the nature of intelligence is not in the same ballpark.

I’ve done vibe coding too, enough to understand that the LLMs don’t think.

https://arstechnica.com/science/2023/07/a-jargon-free-explanation-of-how-ai-large-language-models-work/

Sure, I’m not entitled to anything. And I appreciate your original reply. I’m just saying that your subsequent comments have been useless and condescending. If you didn’t have time to discuss further then… you could have just not replied.

deleted by creator

lol, yeah, I guess the Socratic method is pretty widely frowned upon. My bad. =D

Even a human with no training can create. An LLM can’t.

The only humans with no training (in this sense) are babies. So no, they can’t.